In very general terms, let's say we have a formula for the partial sums of S, a sum that goes on and on forever.

We have an infinite series S, that is, the sum from n = 1 to infinity of a sub n. We could write it out as a sub 1 plus a sub 2 and so on, going on and on forever. An infinite sum exists iff the sequence of its partial sums converges. Convergence of the partial sums is in fact a sufficient condition for series convergence, because this is exactly what we define series convergence to be.

On the other hand, it is possible for the terms of a series to converge to 0 but have the series diverge anyway. The classic example of this is the harmonic series, 1 + 1/2 + 1/3 + 1/4 + ...: here the terms approach 0 (lim(n → ∞) 1/n = 0), but in fact this sum diverges! So the fact that the terms of a series approach 0 is a necessary but insufficient condition for series convergence.
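To see the harmonic series behave this way numerically, here is a minimal sketch. The helper name `harmonic_partial_sum` is made up for illustration and is not from the original text. It prints the nth term next to the nth partial sum; the natural log is shown only as a rough yardstick for how slowly the sum grows.

```python
from math import log


def harmonic_partial_sum(n: int) -> float:
    """Return S_n = 1 + 1/2 + ... + 1/n (hypothetical helper)."""
    return sum(1.0 / k for k in range(1, n + 1))


for n in (10, 1_000, 100_000):
    term = 1.0 / n                    # the n-th term, which shrinks toward 0
    s_n = harmonic_partial_sum(n)     # the n-th partial sum, which keeps growing
    print(f"n={n:>7}  term={term:.6f}  S_n={s_n:.4f}  ln(n)={log(n):.4f}")
```

The terms shrink toward 0 while the partial sums keep climbing past any bound, just very slowly. A finite computation like this only suggests divergence; it does not prove it.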

That the terms must approach 0 makes sense intuitively, because the terms need to get smaller and smaller if we have any hope of our sum "jumping" around less and less and "settling" on a single value. If the terms converged to any nonzero number, then naturally the sum would diverge, as it would keep growing in size on and on. But it should be noted that terms approaching 0 is a necessary, not a sufficient, condition for series convergence.
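The "settling" picture can be sketched with two made-up example series, neither taken from the original text: one with terms 1/2^n, which approach 0, and one with terms n/(n+1), which approach 1. The helper `partial_sums` is a hypothetical name used only for this illustration.

```python
def partial_sums(term, count):
    """Return the first `count` partial sums of term(1) + term(2) + ..."""
    sums, total = [], 0.0
    for n in range(1, count + 1):
        total += term(n)
        sums.append(total)
    return sums


# Terms 1/2^n approach 0, and the partial sums settle near 1.
settling = partial_sums(lambda n: 0.5 ** n, 30)

# Terms n/(n+1) approach 1, so every step adds almost 1 and the sum never settles.
drifting = partial_sums(lambda n: n / (n + 1), 30)

print("last two settling sums:", settling[-2], settling[-1])
print("last two drifting sums:", drifting[-2], drifting[-1])
```

In the first series consecutive partial sums are almost identical by the 30th step. In the second they still differ by nearly 1, which is the "jumping" that rules out convergence.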

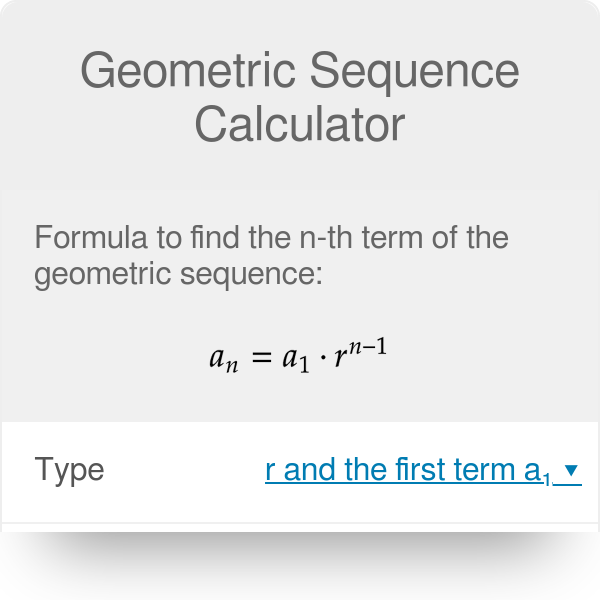

A numerical sequence is an ordered (enumerated) list of numbers where:

- The order in which the numbers appear matters.
- Each term can be considered the output of a function where, instead of an argument, we specify a position.

The terms of a sequence are (usually) represented by the letter a followed by the position (or index) as a subscript. If we start counting from 0, the first term of a sequence is a_0, followed by a_1, and the term in the nth position is a_(n-1). Among the many types of sequences, it's worth remembering the arithmetic and the geometric sequences.

If each term of a sequence is an integer, then we are dealing with an integer sequence. Technically there's not much difference from any other mathematical sequence, but we can quickly calculate integer sequences by hand. Many integer sequences are well known.

There are several ways to find a formula for the partial sums. One is to use algebra to deduce a formula, like Gauss did with S = 1 + 2 + 3 + 4 + .... Another is to use established partial sums to derive new ones, like a generalization of Gauss' method to any arithmetic series. A third is to guess the formula and prove it by induction. For 1 + 3 + 5 + ..., the partial sum looks a lot like n^2 as one works out S_1, S_2, etc. We want to prove that S_n = n^2. Suppose this holds for some n. The next partial sum is the next term (2n+1) plus the sum of the previous n terms (n^2), that is, n^2 + 2n + 1. Plugging the next n into our partial sum formula, we see that (n+1)^2 = n^2 + 2n + 1, which is what we got earlier. This shows that given a partial sum equal to n^2, all partial sums after it follow the pattern. Then we simply check 1 + 3 = 2^2 to show that there is a partial sum equal to n^2. I imagine that there are more ways, but those are the ways I can think of. I feel like on AP tests they will be given, though.

Let a(n) denote the nth term in a series and let S(n) denote the nth partial sum. We know that if lim(n → ∞) S(n) converges, then the infinite sum exists and is equal to that limit. Conversely, what if lim(n → ∞) S(n) converges? What does this say about a(n)? Well, we know that a(n) = S(n) - S(n-1). Suppose that the infinite series exists at L (i.e. lim(n → ∞) S(n) = L). Then lim(n → ∞) a(n) = L - L = 0. So the sum can only converge if the terms are approaching 0.
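The claim that the partial sums 1, 1 + 3, 1 + 3 + 5, ... equal n^2 can be spot-checked numerically. The sketch below uses hypothetical helper names (`odd_term`, `partial_sum`) and assumes terms are counted from n = 1. It also checks that each term is the difference of two consecutive partial sums. Passing checks over a finite range are a sanity test, not a replacement for the induction argument.

```python
def odd_term(n: int) -> int:
    """n-th odd number, counting from n = 1: 1, 3, 5, ..."""
    return 2 * n - 1


def partial_sum(n: int) -> int:
    """S_n found by brute-force addition of the first n odd numbers."""
    return sum(odd_term(k) for k in range(1, n + 1))


for n in range(1, 200):
    # Guessed closed form: S_n = n^2.
    assert partial_sum(n) == n ** 2
    # Inductive step: n^2 plus the next term (2n+1) equals (n+1)^2.
    assert n ** 2 + odd_term(n + 1) == (n + 1) ** 2
    # Each term is the difference of consecutive partial sums.
    assert partial_sum(n) - partial_sum(n - 1) == odd_term(n)

print("all checks passed for n = 1 .. 199")
```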
